wire.less.dk

Posted on January 2, 2019

- 25 years of experience in internet technology at large

- 20 years of experience in networking at large

- speciality: wireless networking

- Networks for science, IoT, environmental sensing

- air pollution, water monitoring, and more

- LoRaWAN, specifically The Things Network

- community-driven data

- Free and open source software

- IT Security

- System administration

- Extensive international experience and networks, predominantly in Africa and Asia

Remarks on Enabling The Things Network for InfluxDB

Posted on May 22, 2018

Two weeks ago I had the pleasure of teaching LoRa, LoRaWAN and The Things Network (TTN) at the Joint ICTP-IAEA School on LoRa Enabled Radiation and Environmental Monitoring Sensors.

The only unfortunate thing was the timing, which made me miss David G. Simmons’ teaching just a few days later.

While I was showing Node-RED as a tool for getting data from The Things Network into InfluxDB, David presented “Enabling The Things Network for InfluxDB”.

So, naturally, I had to try that too, configuring Telegraf like so:

```toml
[[inputs.mqtt_consumer]]
  servers = ["tcp://eu.thethings.network:1883"]
  qos = 0
  connection_timeout = "30s"
  topics = [ "+/devices/+/up" ]
  client_id = ""
  username = "username"
  password = "password"
  data_format = "json"
```
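To see which topics the `topics` filter `+/devices/+/up` in this configuration picks up, here is a minimal Python sketch of MQTT wildcard matching. The function `topic_matches` is hypothetical (it is not part of Telegraf or of any MQTT library) and only handles `+` (exactly one level) and a trailing `#`. The example topic has the form in which TTN's uplink data arrives.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic matches a topic filter.

    Sketch only: '+' matches exactly one level, a trailing '#' matches
    the parent level and everything below it.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")
    for i, level in enumerate(filter_levels):
        if level == "#":
            # '#' is only valid as the last level of a filter
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            return False
        if level != "+" and level != topic_levels[i]:
            return False
    # Without '#', the number of levels must agree exactly
    return len(filter_levels) == len(topic_levels)


# TTN v2 uplinks are published as <AppID>/devices/<DevID>/up
print(topic_matches("+/devices/+/up",
                    "pitlab-ds18b20/devices/itu-pitlab-seb-thingsuno-6/up"))    # True
print(topic_matches("+/devices/+/up",
                    "pitlab-ds18b20/devices/itu-pitlab-seb-thingsuno-6/down"))  # False
```

Because `+` stands for one level only, the filter subscribes to uplinks from every device of every application the credentials can see, and to nothing else.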

David writes:

A restart of Telegraf and suddenly a ton of data was streaming from their sensors! Of course, you’ll have to use real values for your own username and password.

In fact, it is not quite that easy:

1/ Your personal username and password on The Things Network do not give you access to the MQTT topics. Instead, you use the application ID as the username and the application’s access key as the password.

2/ With that in place, you do get a lot of data, but it is all metadata from the TTN handler, not the actual sensor data. Why?

```
1526984334188284335 32498 influxus 51456000 867.1 12 3 55.6858 12.5521 0 -63 9.8 2153236443 1 pitlab-ds18b20/devices/itu-pitlab-seb-thingsuno-6/up
1526984339157003551 29102 influxus 51456000 868.3 -26 1 55.65956 12.59067 1 -99 7.8 1694919843 1 pitlab-ds18b20/devices/itu-pitlab-seb-thingsuno-7/up
1526984345832526531 1024 influxus 51456000 868.1 -26 0 55.65956 12.59067 1 -91 9.5 1701652715 30 0 55.65961 12.591463 1 -106 3.75 1703598403 1 pitlab-ds18b20/devices/itu-pitlab-seb-thingsuno-5/up
```

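The MQTT consumer plugin expects messages in one of the Telegraf Input Data Formats, such as JSON.

That, however, is not what a TTN node sends, unless I specifically tell it to. TTN does wrap every uplink in a JSON message, but the sensor bytes travel inside it as a base64-encoded string (`payload_raw`). Telegraf’s JSON parser ignores string values by default, so only the numeric metadata ends up in the database.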

Recommended practice is for a sensor node to send as small a payload as possible, because airtime and battery power are both precious resources. So the node should not send redundant information (such as field names) in JSON objects; instead, it sends just bytes.
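To put rough numbers on this, here is a small Python sketch that compares the same reading sent as a JSON object and as two raw bytes. The 2-byte layout (a signed 16-bit big-endian integer in hundredths of a degree Celsius) is a hypothetical choice for illustration, not a TTN convention.

```python
import json
import struct


def encode_json(temperature_c: float) -> bytes:
    """Self-describing encoding: field name and value as JSON text."""
    return json.dumps({"temperature": temperature_c}).encode("utf-8")


def encode_compact(temperature_c: float) -> bytes:
    """Hypothetical compact encoding: hundredths of a degree as a signed
    16-bit big-endian integer (covers -327.68 to 327.67 degrees C)."""
    return struct.pack(">h", round(temperature_c * 100))


reading = 21.37
print(len(encode_json(reading)))     # 22
print(len(encode_compact(reading)))  # 2
```

The price of the compact form is that the receiving side must know the byte layout before the payload means anything.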

If we want Telegraf to read our MQTT topics and understand the actual sensor readings, there are several ways of making that work, and it’s actually quite easy:

1/ We can add a Payload Converter on TTN to create a JSON object. Note that the handler is the right place to do this, because from there on, additional data no longer hurts our network or power budgets.

2/ Alternatively, we could script this on the InfluxDB side.
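Both options come down to the same decoding step. Below is a minimal Python sketch of it that lets you try the logic locally. `decode_payload` is what a payload converter would compute (on TTN itself such functions are written in JavaScript, so this only mirrors the logic). `uplink_to_line_protocol` is the InfluxDB-side variant: it takes one uplink message as delivered over MQTT, base64-decodes its `payload_raw` field and emits an InfluxDB line protocol record. The sketch assumes TTN's v2 message fields `app_id`, `dev_id` and `payload_raw`. It also assumes a hypothetical encoding in which the node sends one temperature reading as a signed 16-bit big-endian integer in hundredths of a degree. The function names and the measurement name `ttn_temperature` are made up for illustration.

```python
import base64
import json
import struct


def decode_payload(payload: bytes) -> dict:
    """Converter logic: raw uplink bytes -> JSON-ready object.

    Hypothetical encoding: one signed 16-bit big-endian integer,
    temperature in hundredths of a degree Celsius.
    """
    if len(payload) != 2:
        raise ValueError(f"expected 2 bytes, got {len(payload)}")
    (raw,) = struct.unpack(">h", payload)
    return {"temperature": raw / 100}


def escape_tag(value: str) -> str:
    """Escape commas, equals signs and spaces in line-protocol tag values."""
    return value.replace(",", r"\,").replace("=", r"\=").replace(" ", r"\ ")


def uplink_to_line_protocol(message: str) -> str:
    """InfluxDB-side variant: one TTN (v2) uplink JSON message -> line protocol.

    No timestamp is appended, so InfluxDB uses the time of arrival.
    """
    msg = json.loads(message)
    fields = decode_payload(base64.b64decode(msg["payload_raw"]))
    tags = f"app_id={escape_tag(msg['app_id'])},dev_id={escape_tag(msg['dev_id'])}"
    field_set = ",".join(f"{key}={value}" for key, value in fields.items())
    return f"ttn_temperature,{tags} {field_set}"


# Hypothetical uplink message carrying the bytes 0x08 0x59 (2137 -> 21.37)
example = json.dumps({
    "app_id": "pitlab-ds18b20",
    "dev_id": "itu-pitlab-seb-thingsuno-6",
    "payload_raw": base64.b64encode(bytes([0x08, 0x59])).decode("ascii"),
})
print(json.dumps(decode_payload(bytes([0x08, 0x59]))))  # {"temperature": 21.37}
print(uplink_to_line_protocol(example))
# ttn_temperature,app_id=pitlab-ds18b20,dev_id=itu-pitlab-seb-thingsuno-6 temperature=21.37
```

Subscribing to the broker and writing the record to InfluxDB are left out here. The point is only that the decoding has to happen somewhere, and on the handler it costs nothing in airtime.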

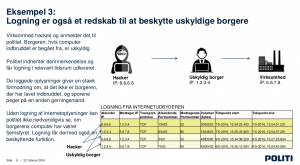

Oops, there goes the presumption of innocence – or, collateral damage of Danish internet surveillance plans

Posted on February 27, 2016

This piece of PR by the Danish police advertises the benefits of so-called session logging: surveillance and logging of all internet sessions, to be carried out by internet service providers.

It has already been criticized and ridiculed, for example in Henrik Kramshøj’s article here (in Danish), for a number of factual errors and unfounded assertions. It has also been praised for entertaining elements like the IP address of “THE HACKER” being 6.6.6.6 (which happens to be an IP address owned by “DNIC-AS-00768 – Navy Network Information Center (NNIC),US”).

In addition to all this, and likely to go unnoticed, the document also does away with one of the principles of justice: the presumption of innocence, or “innocent until proven guilty”.

In Example 3 we learn that internet surveillance is in the citizen’s best interest, because a citizen’s computer may have been used by third parties to carry out attacks. That is a pretty normal thing to happen in a world of Windows, malware, botnets etc.

However, the citizen would not be able to prove her innocence (!) were it not for police surveillance, which comes to the rescue and protects the citizen by providing data on how her computer was used by the Hacker. Translation of the central argument:

“Without logging of Internet information, the police cannot necessarily see whether the citizen’s computer has been remotely controlled. Logging therefore has a protective function.”

And voilà: as a little collateral damage of the technical debate, there goes the ‘presumption of innocence’.

The citizen now has to prove her innocence – and should be grateful for being under surveillance.

As a side note, we should study whether surveillance on a global level now has to be seen in a very new light …

Building the PiCloud – RaspberryPi & ownCloud

Posted on February 18, 2016

Why give your data to the big cloud (= someone else’s computer), when you can have the fun (and the control) yourself?

Learn basic Linux, Raspberry Pi and ownCloud setup in this fun workshop, compiled for an event supported by IDA at the PITLab/ITU.

Materials are shared here. If you run your own workshop, it would be great to know, and if you are interested in us doing one for or with you, please contact us!

PiCloud Guide PDF

PiCloud – Building ownCloud on a Raspberry Pi by Sebastian Büttrich is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Huawei brings 1Gbps internet to Danish households

Posted on February 10, 2016

This is not really a political item, just a technology decision, but it is remarkable against the current backdrop of world affairs, cyberwars, etc.: Huawei Partnership Brings 1 Gigabit Speeds Across Denmark

Non-free basics, half the net, bread vs cake, and more

Posted on February 9, 2016

My NSRC colleague Steve Song has written an excellent piece, “Resolving the Free Basics Paradox”, on the Indian government’s decision to effectively ban Facebook’s “free” internet offering.

I will only add two aspects – slightly underrepresented, imho – to the discussion:

1/ Not Free

Nothing new here: it is common knowledge by now that “free” is of course not “free”, because money/value flows from user to company. So even in this case, we should be careful not to call Facebook’s offering something it is not: it is not free, and it is not the internet.

2/ All of it? Not quite.

The other addition is the global context of Facebook’s fight for India. When stating that “Facebook had already won the Internet” (which I find largely to be true), we should add: “won that part of the Internet in which US companies dominate”. There is another half, and it is growing into the most promising future markets (Africa), with India right on its doorstep.

This is one motivation for Facebook to throw millions into marketing campaigns for their “free internet”, as it may turn out that they are not the only ones who can “connect people”.

The more they become a household commodity, the more they become obsolete and replaceable – the ultimate consequence of the simple fact stated above: it is the user who is the value, not the platform as such.

With those two remarks, I can only agree with the conclusion: in the name of a truly free internet, low-speed basic free access is the way forward, with freedom of choice about where you put your value.

P.S. Over at ICTWorks, the “bread vs. cake” picture is used to voice anger about the India decision.

Let us stay in that picture for a moment:

So, Facebook throws out some breadcrumbs, not to feed anyone’s hunger (if that were what they were interested in, they could simply give free internet access), but to attract more people to their buffet. Mind you, a buffet where the visitors bring the food: their data, their profiles.

Facebook is an ad and profile agency, running a social network as a tool to get to these profiles. Their product is profiles, so they want more of them. Fair enough. That is no more evil than any other profile business.

But if you are in that business, you have to accept that you are not alone in there. There are others, and there are markets that will take the freedom of saying “no” to your breadcrumbs. Others might offer better food, and ultimately, it will be the visitors who choose their buffet.

Facts about the new Windows 10 (non-)privacy statements and services agreements

Posted on July 30, 2015

Since Microsoft’s new privacy statement and services agreement total 45 (hard-to-understand) pages, here is a short extract of the most important points.

This extract is not original research, just a compilation of things found in the root texts and sources quoted below.

Microsoft basically grants itself very broad rights to collect everything you do, say and write with and on your devices in order to sell more targeted advertising or to sell your data to third parties.

The company appears to be granting itself the right to share your data either with your consent “or as necessary”.

Sync by default

Microsoft will sync settings and data with its servers by default.

This includes your browser history, favorites and the websites you currently have open, as well as saved app, website and mobile hotspot passwords and Wi-Fi network names and passwords.

This is much like how Google Chrome sync works; however, if you are not comfortable with sharing your usage habits, you can deactivate it in the settings.

Your content

“When you share Your Content with other people, you expressly agree that anyone you’ve shared Your Content with may, for free and worldwide, use, save, record, reproduce, transmit, display, communicate (and on HealthVault delete) Your Content.”

“To the extent necessary to provide the Services to you and others (which may include changing the size, shape or format of Your Content to better store or display it to you), to protect you and the Services and to improve Microsoft products and services, you grant Microsoft a worldwide and royalty free intellectual property licence to use Your Content, for example, to make copies of, retain, transmit, reformat, distribute via communication tools and display Your Content on the Services.”

Cortana, the personal assistant

“To enable Cortana to provide personalized experiences and relevant suggestions, Microsoft collects and uses various types of data, such as your device location, data from your calendar, the apps you use, data from your emails and text messages, who you call, your contacts and how often you interact with them on your device. Cortana also learns about you by collecting data about how you use your device and other Microsoft services, such as your music, alarm settings, whether the lock screen is on, what you view and purchase, your browse and Bing search history, and more.”

“we collect your voice input, as well your name and nickname, your recent calendar events and the names of the people in your appointments, and information about your contacts including names and nicknames.”

Advertising ID

Windows 10 generates a unique advertising ID for each user on each device.

This ID can be used by developers and ad networks to profile you and serve commercial content.

As with data sync, you can turn this off in the Settings menu > Privacy > General > Change privacy options.

Microsoft obtains your encryption key

When device encryption is turned on, Windows 10 automatically encrypts the drive it is installed on and generates a BitLocker recovery key, which is backed up to your OneDrive account.

Microsoft WILL disclose your data – in “good faith”

“We will access, disclose and preserve personal data, including your content (such as the content of your emails, other private communications or files in private folders), when we have a good faith belief that doing so is necessary to protect our customers or enforce the terms governing the use of the services.”

WiFi Sense

Activated by default, WiFi Sense lets you share Wi-Fi network access with your Facebook, Outlook.com and Skype contacts.

It works in the background, automatically sharing networks you choose to share and downloading credentials for Wi-Fi networks your contacts have shared with you.

The claim is that sharing stops the moment you turn off WiFi Sense or stop sharing a given network.

Note that this conflicts with their general content rules, which say “once shared, always shared”.

It is also claimed that this will not work for 802.1X-protected networks.

Both limitations, with regard to time and type of authentication, are clearly imposed by the client alone.

From a technical point of view, any type of access information may be shared, and may remain shared for an unlimited time.

Note that Microsoft admits that when sharing is revoked, it may take several days (!) for the revocation to take effect.

This demonstrates the technical issues with the sharing model.

Sources:

- Microsoft Privacy Statement: https://www.microsoft.com/en-us/privacystatement/default.aspx
- Microsoft Services Agreement: https://www.microsoft.com/en-gb/servicesagreement/default.aspx
- http://www.techworm.net/2015/07/by-downloading-windows-10-you-are-allowing-microsoft-to-spy-on-you.html
- https://edri.org/microsofts-new-small-print-how-your-personal-data-abused/
- http://www.version2.dk/artikel/saadan-virker-omstridt-wifi-deling-i-windows-10-300991
- http://www.theregister.co.uk/2015/06/30/windows_10_wi_fi_sense/
- https://news.ycombinator.com/item?id=9750797

The recent PGP/GnuPG debate – some thoughts

Posted on April 17, 2015

It is a bit ironic:

In the wake of the Snowden revelations, only very few security and encryption technologies were found to still be trustworthy and uncrackable (from all we know today).

One of these technologies is PGP.

Side note: The terms “OpenPGP”, “PGP”, and “GnuPG / GPG” are often used interchangeably. This is a common mistake, since they are distinctly different.

OpenPGP is technically a proposed standard, although it is widely used. OpenPGP is not a program, and shouldn’t be referred to as such.

PGP and GnuPG are computer programs that implement the OpenPGP standard.

PGP is an acronym for Pretty Good Privacy, a computer program which provides cryptographic privacy and authentication. For more information, see the Wikipedia article.

GnuPG is an acronym for GNU Privacy Guard, another computer program which provides cryptographic privacy and authentication. For further information on GnuPG, see the Wikipedia article.

Now, the very moment PGP etc. is identified as one of the only feasible approaches to “privacy for the common user”, it comes under heavy fire from various sides.

(Some sources for further reading at the bottom of this article)

The criticism mostly targets the following areas:

1/ quality/beauty of core code / protocol

2/ usability issues

3/ key management

4/ social / cultural issues

(where 2/ & 3/ might be seen as the same)

2/ usability issues

This mainly points at the lack of easy-to-use implementations, causing users to make grave mistakes like sending out their private key instead of their public key (though I would argue that there has been great progress).

An Einsteinism is due, though:

“make things as easy as possible, but don’t make them more simple than they are.”

In the context of online privacy, Clay Shirky might be wrong when he says:

“Communications tools don’t get socially interesting until they get technologically boring. The invention of a tool doesn’t create change; it has to have been around enough that most of society is using it. It’s when a technology becomes normal, then ubiquitous, and finally so pervasive as to become invisible, that profound changes happen.”

No level of usability will get you around the fact that privacy is a conscious act: it can (and should) be made easier, but not invisible.

If you don’t get the key idea, you can’t have a locked house.

If you don’t care enough about encryption to keep your key in a safe place, it will never work for you, no matter how well you have designed your rounded corners.

If you expect some service out there to make things easier for you, well, then obviously privacy is not for you.

Tools that allegedly aim at improving the user-friendliness of PGP, e.g. https://www.mailvelope.com/, often do so at the expense of further corroding the very principle of end-to-end security. They expose private keys to browsers (known to be insecure) or, worse, to your webmail provider.

Tools that bring high-quality security to mobile messaging (like TextSecure) do so on operating systems that are so insecure in themselves that even the suggestion of privacy is misleading. (It should be noted, though, that placing trust somewhere might be a viable strategy, just not an ultimately private one.)

3/ key management

These problems are best illustrated by what all PGP users frequently experience:

“oh, could you send me that unencrypted? I’ve changed computers and don’t have my key anymore”

“ooooh, that’s not REALLY my key anymore …”

“ooh … which of my 13 keys DID you use?”

4/ social / cultural issues

It is true to say that, after decades of existence, PGP still has not been able to truly break into the mainstream, and its user group tends to be nerdy.

PGP is not cool, but it has to be added that for many users, email is no longer the preferred means of communication. Email is not cool.

Even at IT and technical universities, the use of mail clients is far from natural, and (inherently insecure) webmail, FB messaging, WhatsApp, Kik etc. are often the norm.

Even SMS, once thought to be THE universal messaging standard, has been overtaken by WhatsApp. That takes mobile messages into the Facebook platform and adds to a surveillance system impressive in its all-inclusiveness.

Further reading:

- An illustration of why OpenPGP isn’t mainstream yet, “PGP best practices”: https://help.riseup.net/en/gpg-best-practices
- The BIG SEVEN problems with security: https://leap.se/en/docs/tech/hard-problems
- Moxie Marlinspike’s (of Whispersystems/TextSecure) attack on GPG: http://www.thoughtcrime.org/blog/gpg-and-me/
- A rebuttal, “GPG Criticism Reaches New Low As Use Cases Expand”: http://qntra.net/2015/02/gpg-criticism-reaches-new-low-as-use-cases-expand/

Raspberry Pi filesharing server with ownCloud – [IPv6 enabled::!]

Posted on March 19, 2015

(On the occasion of the IPv6 sessions at http://wireless.ictp.it/school_2015/ – if you are interested in a workshop on this, please contact us!)

How to set up an IPv6-enabled Raspberry Pi file-sharing server with ownCloud

1/ Install Ubuntu, Debian or similar

on a Raspberry Pi (a Pi 2 is best).

Help on installation is at: http://www.raspberrypi.org

2/ USB stick: plug in and mount

On the command line of your Pi, do the following:

#cd /dev

and then plug in a USB stick and see what device name it shows up as.

Typically it is

/dev/sda1

or similar.

If in doubt, take the USB stick out, list the devices with

#ls /dev

and plug it in again (listing again each time) until you see the new device name.

You can also do a

#grep sd /var/log/kern.log

or

#grep usb /var/log/kern.log

to help you find it.
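The unplug/replug check described above boils down to comparing two listings of /dev. Here is a small Python sketch of that comparison. The function name `new_block_devices` and the sample listings are hypothetical, and it assumes the stick appears under an `sd*` name, as is typical for USB storage.

```python
def new_block_devices(before: list[str], after: list[str]) -> list[str]:
    """Return /dev names that appeared between two listings.

    Only SCSI/USB disk names (sda, sda1, sdb, ...) are kept, since that
    is how a USB stick typically shows up.
    """
    added = set(after) - set(before)
    return sorted(name for name in added if name.startswith("sd"))


# Hypothetical listings of /dev before and after plugging in the stick
before = ["mmcblk0", "mmcblk0p1", "mmcblk0p2", "tty1"]
after = ["mmcblk0", "mmcblk0p1", "mmcblk0p2", "sda", "sda1", "tty1"]
print(new_block_devices(before, after))  # ['sda', 'sda1']
```

On the Pi itself, the two lists would come from `os.listdir("/dev")` called before and after plugging in. The entry to mount is the partition (the numbered name, here sda1), not the whole disk.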

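In what follows, we assume it is

/dev/sda1

Create a mount point:

#mkdir /media/usbstick

and mount it:

#mount -t vfat -o rw,uid=$(id -u www-data),gid=$(id -g www-data),umask=007 /dev/sda1 /media/usbstick/

(The stick is assumed to be FAT-formatted. vfat has no Unix ownership or permissions of its own, so the uid, gid and umask options make the files owned by, and writable for, www-data. On Debian/Ubuntu, that is the user Apache, and thus ownCloud, runs as.)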

3/ ownCloud

Install ownCloud:

#cd /home/<your username>

#sh -c "echo 'deb http://download.opensuse.org/repositories/isv:/ownCloud:/community/xUbuntu_14.04/ /' >> /etc/apt/sources.list.d/owncloud.list"

#wget http://download.opensuse.org/repositories/isv:ownCloud:community/xUbuntu_14.04/Release.key

#apt-key add - < Release.key

#apt-get update

#apt-get install owncloud

Put the data directory on the stick and symlink it:

#cd /var/www/owncloud/

#mkdir /media/usbstick/data

#cp -R ./data/. /media/usbstick/data/

#rm -rf ./data

#ln -s /media/usbstick/data ./data

Instead of the symlink, you could also edit

config/config.php

in your ownCloud directory and point it at the data directory on the USB stick:

'datadirectory' => '/path/to/your/data',
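If you prefer to script the config.php change rather than edit it by hand, here is a hedged Python sketch that rewrites the `datadirectory` entry with a regular expression. It assumes the entry is written in the single-quoted form shown above, and the sample config text is hypothetical. `set_datadirectory` is a made-up helper, so keep a backup of config.php before trying anything like it.

```python
import re


def set_datadirectory(config_php: str, new_path: str) -> str:
    """Replace the value of the 'datadirectory' entry in ownCloud config.php text.

    Only handles the single-quoted form  'datadirectory' => '...',
    and raises if there is not exactly one such entry.
    """
    pattern = re.compile(r"('datadirectory'\s*=>\s*')[^']*(')")
    # A function as replacement avoids backslash surprises in new_path
    updated, count = pattern.subn(lambda m: m.group(1) + new_path + m.group(2), config_php)
    if count != 1:
        raise ValueError(f"expected one datadirectory entry, found {count}")
    return updated


# Hypothetical excerpt of config.php
sample = "<?php\n$CONFIG = array (\n  'datadirectory' => '/var/www/owncloud/data',\n);\n"
print(set_datadirectory(sample, "/media/usbstick/data"))
```

On the Pi you would read /var/www/owncloud/config/config.php, pass its text through the function and write the result back as root.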

At this point, you should have an ownCloud server, reachable at

http://<address of your Pi>/owncloud

4/ Make it IPv6

Edit your Apache site configuration (the file for your site in /etc/apache2/sites-enabled/):

#vi /etc/apache2/sites-enabled/<your site file>

so that it starts with this:

<VirtualHost [2001:760:2e0b:1728:ba27:ebff:fe97:18da]:80>

ServerName your.server.name.org

Of course, you use your own IPv6 address here (the global one, not the link-local address starting with fe80::), which you can find by doing

#ifconfig
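Here is a minimal Python sketch of that selection step, using the standard `ipaddress` module: it skips loopback, link-local and other non-global addresses, picks the first global IPv6 one, and formats the opening VirtualHost line. The function names and the candidate list are illustrative assumptions.

```python
import ipaddress


def pick_global_ipv6(candidates: list[str]) -> str:
    """Return the first globally routable IPv6 address in the list.

    Non-global IPv6 addresses (loopback, link-local fe80::/10,
    unique-local fc00::/7) and IPv4 entries are skipped.
    """
    for text in candidates:
        addr = ipaddress.ip_address(text)
        if addr.version == 6 and addr.is_global:
            return str(addr)
    raise ValueError("no global IPv6 address found")


def virtualhost_line(ipv6: str, port: int = 80) -> str:
    """Apache needs IPv6 addresses in square brackets in <VirtualHost>."""
    return f"<VirtualHost [{ipv6}]:{port}>"


# Addresses as they might be copied from ifconfig output (illustrative)
addresses = ["127.0.0.1", "::1", "fe80::ba27:ebff:fe97:18da",
             "2001:760:2e0b:1728:ba27:ebff:fe97:18da"]
print(virtualhost_line(pick_global_ipv6(addresses)))
# <VirtualHost [2001:760:2e0b:1728:ba27:ebff:fe97:18da]:80>
```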

Then restart Apache:

#apache2ctl restart

You should now have an IPv6 ownCloud server.

5/ Put this on your home DSL (or whatever your connection is)

If you do this at home, you might now want to put this in your router’s DMZ.

Building a microscope from an old broken mobile phone

Posted on May 28, 2014

This might not be strictly wireless, but it’s a good use of DIY technology anyway: old mobile phones make excellent microscopes for use in telemedicine (e.g. blood checks), water quality monitoring, etc.

The cost is almost zero – apart from the phone itself, everything can be built from scrap parts.

This model here loosely follows the instructions on http://www.iflscience.com/ & http://www.instructables.com/id/10-Smartphone-to-digital-microscope-conversion/ (thanks!) and was built by Lars Yndal at the PITLab at ITU Copenhagen – http://pit.itu.dk
